The ability to learn about the consequences of our actions is critical for survival. Reinforcement learning (RL) provides a framework for formally studying learning and has inspired a major reconceptualization of its underlying neural mechanisms. In most studies of reward-based learning, one or several stimuli are associated with varying amounts of reward, and their values have to be inferred and updated over time. Consequently, two neural representations are of interest: the encoding of the expected reward at the time of the stimulus, and the deviation from the expected outcome, or prediction error (PE), at the time of the outcome. The majority of research to date has focused on the PE at the time of outcome, which is present in dopamine neurons in the ventral tegmental area (VTA) and its projection area, the ventral striatum 1, 2, 3, 4. This work has shown that PE signals satisfy PE axioms 5, 6, reflect task context and task demands 7, 8, 9, 10, 11, and signal value and identity deviations 12, 13 as well as social information 14, 15. There has also been particular interest in two classes of RL, often referred to as model-free and model-based 16, but their underlying neural mechanisms are less well understood. In model-free RL, actions or stimuli are directly reinforced by subsequent delivery of reward, while in model-based learning, actions and stimuli are reinforced in a manner that reflects knowledge of task structure.
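The two quantities described above, the expected reward at stimulus time and the prediction error at outcome time, can be illustrated with a minimal sketch. It assumes a simple delta-rule (Rescorla-Wagner style) update with a fixed learning rate `alpha`; the text does not specify a particular model, and the function names, the learning rate, and the trial format are all hypothetical choices made for illustration.

```python
def delta_rule_update(value, reward, alpha=0.1):
    """Return (prediction_error, updated_value) for a single trial.

    Assumption: a fixed learning rate `alpha`; not taken from the source.
    """
    prediction_error = reward - value  # PE at the time of the outcome
    updated_value = value + alpha * prediction_error
    return prediction_error, updated_value


def learn_values(trials, alpha=0.1, initial_value=0.0):
    """Track the expected reward of each stimulus over (stimulus, reward) trials."""
    values = {}
    history = []
    for stimulus, reward in trials:
        # Expected reward at the time of the stimulus
        expected = values.get(stimulus, initial_value)
        pe, values[stimulus] = delta_rule_update(expected, reward, alpha)
        history.append((stimulus, expected, pe))
    return values, history


if __name__ == "__main__":
    # Hypothetical trial sequence: stimulus "A" is rewarded, "B" is not.
    trials = [("A", 1.0), ("A", 1.0), ("B", 0.0)]
    values, history = learn_values(trials, alpha=0.5)
    for stimulus, expected, pe in history:
        print(f"{stimulus}: expected={expected:.2f}, PE={pe:.2f}")
```

In this sketch the stimulus is reinforced directly by the reward that follows it, which corresponds to the model-free case; a model-based learner would additionally use knowledge of task structure when updating values.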

In summary, representations of task knowledge are derived via multiple learning processes operating at different time scales that are associated with partially overlapping and partially specialized anatomical regions.

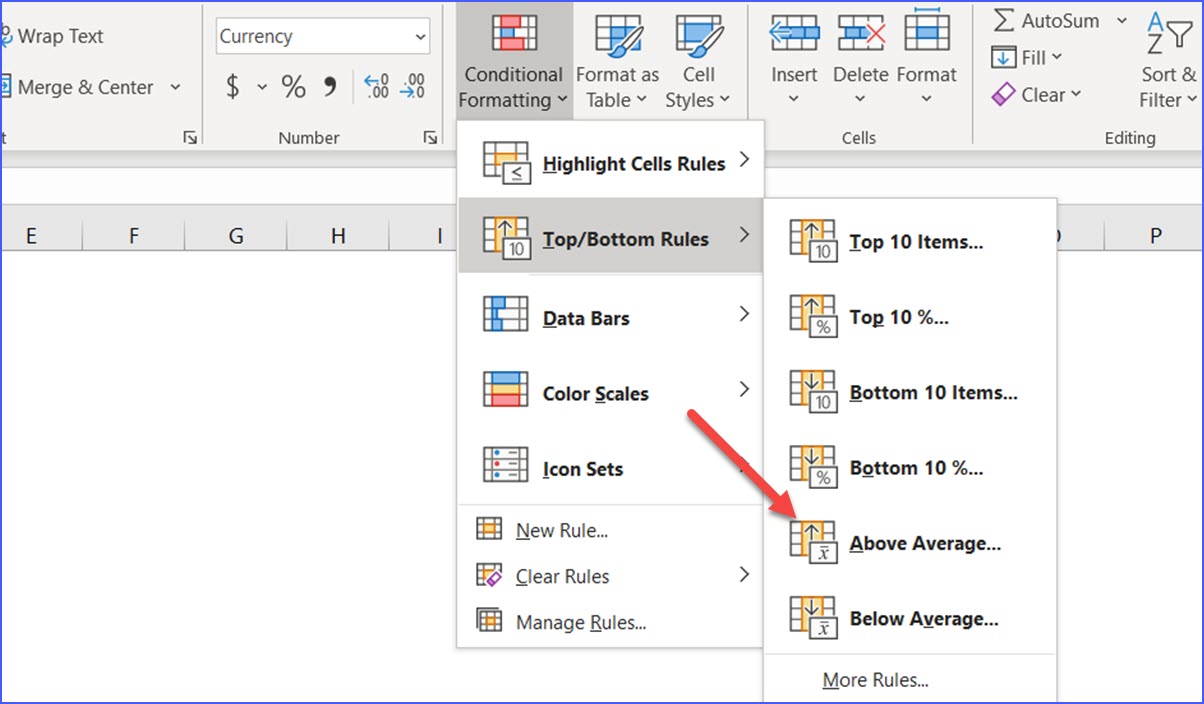

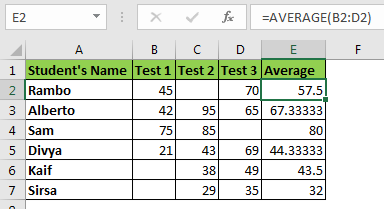

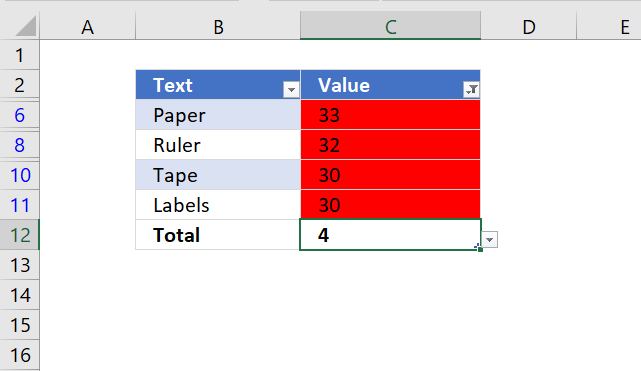

Learning the structure of the world can be driven by reinforcement but also occurs incidentally through experience. Reinforcement learning theory has provided insight into how prediction errors drive updates in beliefs, but less attention has been paid to the knowledge resulting from such learning. Here we contrast associative structures formed through reinforcement and through experience of task statistics. BOLD neuroimaging in human volunteers demonstrates rigid representations of rewarded sequences in temporal pole and posterior orbito-frontal cortex, which are constructed backwards from reward. By contrast, medial prefrontal cortex and a hippocampal-amygdala border region carry reward-related knowledge but also flexible statistical knowledge of the currently relevant task model. Intriguingly, ventral striatum encodes prediction error responses but not the full RL- or statistically derived task knowledge.